Everyone in AI is talking about "agents."

Here's the problem: they're not talking about the same thing.

Simon Willison spent years dismissing the term as hopelessly ambiguous. Then in September 2025, he polled the internet. Got 211 definitions back. Had Gemini sort them into 13 mutually incompatible camps — perception-reasoning-action, tool-calling, autonomous goal-pursuing, multi-agent orchestration, and nine more.

His exact words in the opening: "I've been dismissing the term as hopelessly ambiguous for years."

Nothing has clarified since.

Last month, a founder pitched me his "AI Agent platform."

The deck was sharp. Perception/reasoning/action. Multi-agent orchestration. Autonomous decision loops. Every slide had a concept. Every concept had a buzzword attached.

I asked him: where's the agent?

He opened a browser. Showed me a chat box.

I asked: user sends a message — what happens?

He said: message goes to the LLM, LLM responds, response goes back to the user.

I asked: tool calls? State management? Human confirmation on write operations?

He paused.

"We'll add those in the next version."

I stopped asking.

He's not a bad person.

He's doing what the entire market incentivizes: wrap an LLM in a UI, call it an agent, find customers before anyone asks hard questions. Every VC is pushing it. Every platform encourages the terminology. Everyone in the ecosystem knows, at some level, that it's theater — but no one has structural reasons to say so. Because if the standard gets articulated clearly, most products in the market fail it.

So the standard never gets articulated.

This is me articulating it.

OpenAI's own documentation contradicts itself across product pages on the definition of "agent." Same company, different writers, two different answers. Nobody inside pushed for alignment — because alignment would require someone to admit that some of what they call agents isn't.

Wooldridge proposed a rigorous definition for autonomous agents back in 1994: perceives environment, makes autonomous decisions, pursues goals proactively, has social ability. Thirty years ago.

The field forgot. The amnesia set in collectively and immediately in 2023 — the moment large language models made "agent" commercially useful. Everyone started arguing from scratch about something that had already been argued through.

The word got too useful too fast. People started using it before asking what they were describing.

That founder comes to see me most weeks. Different decks, same chat box. Last visit the cover said "lightweight AI assistant" instead of "agent platform."

The feature page was the same chat box.

He said: "Uncle J, the stuff you asked for is too complex. We're starting simple and upgrading when we have users."

I didn't say anything.

I was thinking: you're not starting simple. You're starting fake.

Simple and fake are not the same thing. Simple can mean three tools, clean permission boundaries, a complete audit trail. Fake means broken architecture dressed up as a roadmap item.

Users can't see architecture. But users can feel whether something is actually doing work on their behalf — or just performing the idea of doing work.

That feeling, accumulated over months, becomes retention data.

One Word. 211 Definitions.

Willison's 211-definition post is worth sitting with — not as a curiosity, but as a diagnostic.

When a single technical term has 211 meanings across 13 incompatible frameworks, one of two things is true. Either the concept is so rich it genuinely contains multitudes. Or the concept is so vague it contains nothing — and everyone has been pouring their own meaning into an empty vessel.

For "agent," it's the second one.

The 13 camps Gemini identified aren't complementary perspectives on the same thing. They're competing definitions that fundamentally contradict each other. A "perception-reasoning-action" agent and a "tool-calling" agent are not the same architectural thing. An "autonomous goal-pursuing" agent and a "multi-agent orchestration" pattern aren't even operating at the same level of abstraction. You can't hold all 13 simultaneously. You have to pick.

Nobody picks. That's the problem.

Here's why this matters commercially — not academically.

When you buy an "AI agent system" for six figures, the vendor's definition of "agent" and your definition are almost certainly not the same. There's a reasonable chance the vendor hasn't thought about their own definition. And it's near certain that nobody in the room — not the sales engineer, not the solutions consultant, not the product manager who wrote the brochure — has been asked to give a precise, testable answer to one question: what does your system do that earns the label "agent"?

The term exists to justify the price, not describe the capability.

Wooldridge offered a rigorous answer to this in 1994. An autonomous agent: perceives its environment, makes decisions autonomously, pursues goals proactively, has social capability to interact with other agents.

Four properties. Testable. Clear.

Every system that calls itself an agent should be able to answer: does it actually perceive state — not just read a database on request, but monitor for changes? Does it decide its next step autonomously — not execute a hardcoded sequence? Does it pursue a goal until completion, or until a human redirects it? Can it interact with other systems in a structured way?

Most "AI agent platforms" I've seen can't pass this test. They respond to inputs. They don't perceive. They execute scripts. They don't decide. They produce one output per input. They don't pursue.

They are, in Willison's phrasing, hopelessly ambiguous — which is a polite way of saying: chat boxes in costumes.

The market will correct this eventually. Markets always do. The mechanism is painful: high churn on wrapper products, confused enterprises demanding refunds, a generation of "AI agent" projects that delivered nothing measurable. That's the cost of ambiguity at scale.

I'd rather pay the cost of clarity upfront.

Anthropic Did Something Unusual

They gave a definition.

In December 2024, Anthropic published "Building Effective Agents," a practical engineering guide that landed in the engineering community like a pressure-relief valve. Everyone was overheating. This was cold water.

One sentence at the core: workflows are predefined code paths orchestrated by developers; agents are systems where the LLM dynamically directs its own process and tool use.

Simple. But it draws a real line.

The line runs through a single question: is the LLM deciding what to do next?

Not generating text that describes what could be done. Not pattern-matching to a template. Not following a flow chart the developer wrote last Tuesday. Deciding — taking in the current state of the world, including the results of prior tool calls, and determining the next action.

If the LLM is answering questions and returning text — that's one API call. Not even a workflow.

If the LLM is deciding "I need this tool," calling it, examining the result, deciding "now I need this other tool," and repeating until the task is done — that's the skeleton of an agent.

The gap between those two things is not a feature gap. It's an architectural gap.

The other uncomfortable thing Anthropic said — and LangChain documented the same observation from their own angle — is that frameworks seduce you into complexity you don't need.

Every agent framework I've tried does this. They offer abstractions for orchestration, memory management, multi-agent communication, tool routing. Beautiful diagrams. Every abstraction introduces new failure modes, new debugging surfaces, new dependencies that have to be kept alive.

Anthropic's own stack recommendation is three things: retrieval, tools, memory.

That's it. No framework. No layered middleware.

The companies that actually understand agents do less, not more. The complexity in fake-agent products isn't engineering sophistication — it's the architectural equivalent of buying decorations to hide a crack in the wall.

But there's something Anthropic's guide left unsaid, something I only saw clearly after stepping back from the code.

Agent shells have commoditized.

Manus, Coze, Dify — they're all building the same thing: an LLM wired to a conversation UI and a handful of tool calls, sold as an "agent platform." That pattern is now a commodity. A weekend to clone the interface. Months — maybe years — to clone what sits underneath.

The real moat isn't the shell. It's what the shell sits on top of.

Business data. Integrated workflows. The embedded presence in your clients' daily systems — wherever they actually operate.

Customer data accumulated over real engagements, workflows tuned against actual operational pain, a footprint inside the tools your clients use every day — none of that can be lifted by a platform vendor, regardless of how polished their shell gets.

AI is the brain. The system is the assembly line. Humans are the last mile.

I didn't arrive at that sentence in a planning meeting. It came mid-project, after stepping back from the code and looking at what we were actually building.

An agent with a polished shell but no underlying business system is a head without a body. A convincing demo that can't survive production.

That's the divide between AI-enhanced and AI-native.

I've Used Both. The Difference Is Not Subtle.

I ran one of those LLM-wrapper "AI assistants" myself. For real clients. Charged for it.

I've also used Lovart to generate presentations, Cursor to write production code, and Claude Code to restructure entire codebases.

The felt experience is not a difference of degree. It's a difference of category.

Here's what a wrapper feels like:

You type. It outputs a paragraph.

You type again. It outputs another paragraph.

You say "okay, make this change" — it says "here's the revised version, please copy and paste it yourself."

You're its hands. It's narrating.

It describes work. It doesn't do work.

Here's what Lovart feels like:

You say "make a 10-slide product launch deck."

You watch it build the deck — slide by slide, in real time. You can intervene on any slide as it generates. You can redirect the style. You can roll back anything you don't like.

It's doing work. It's collaborating with you while doing it.

This is not a feature gap. This is a species gap.

The architecture explains the experience exactly.

A wrapper is one API call: you speak → LLM outputs → system displays it. One trip. Done.

Lovart is a loop: you speak → LLM decides next action → calls a tool → examines result → decides next action → calls another tool → continues until the task is complete or you intervene.

One is a conversation. The other is execution.

The loop is the difference. Not the UI. Not the model. Not the prompt engineering. The loop.

The first time I finished a real session with Lovart, I had one clear reaction:

That's what an agent actually is.

I went back and looked at the "Copilot" I'd been building for a creator-economy SaaS client. Chat box. LLM connection. A few tool call names registered in the schema. LLM outputs text. I display the text to the user.

My thing was the exact opposite of Lovart.

My thing was a wrapper.

I'd been telling myself — and implicitly, the client — that it was something more. The architecture said otherwise.

The answer to why was sitting in a source code leak on the night of March 31st.

March 31st

I was debugging a Copilot bug when the message hit the group chat.

@anthropic-ai/claude-code v2.1.88 shipped with sourcemaps included.

I stared at that for three seconds.

Then I dropped the bug and started doing one thing: chasing forks.

Seven browser tabs open by midnight.

The original repository was already getting pulled — DMCA takedowns filing one after another. Every new fork I found, I cloned immediately, diffed against the others, checked which version was most complete. Anthropic's legal team and GitHub's crawlers were racing each other. Every engineer in the industry was racing them too.

I was in that race.

By 4 AM I had the complete 512,000-line TypeScript version on my hard drive.

The cause was unglamorous. The engineer who packaged the release didn't add *.map to .npmignore.

59.8 MB of sourcemaps. 1,906 files. Sitting on npm for a few hours. In that window, someone built a Rust reimplementation called claw-code and watched it hit 50,000 GitHub stars. Anthropic issued DMCA takedowns and sent cease-and-desist letters to OpenCode.

But the code had already been read.

One clarification, because it matters: I studied the publicly available architecture analyses and the design philosophy the sourcemaps exposed — not the leaked source code itself.

The Latent.Space deep-dive and Layer5's engineering breakdown are what I worked from. The leak gave the industry a rare look inside a production agent that was actually working — and that structural information became public. That's what I studied.

What I found wasn't magic.

A constrained default tool set. Multi-tier memory architecture. Multiple permission modes. Aggressive prompt cache reuse. A context compression mechanism. The whole core structure — clear, austere, comprehensible.

Nothing that made you think "only Anthropic could have designed this." What you saw instead was: rigorous engineering judgment, consistently applied, with hard lines drawn around agent safety boundaries.

The emperor's new clothes fooled so many people not because the clothes were beautiful — but because nobody wanted to be the first to say they saw nothing.

The sourcemap leak was someone publishing the garment's engineering specification. Good craft, no secrets, just judgment and execution applied consistently.

If you're ever pitched an "AI agent platform" at a premium price, three questions cut through everything:

Do your tools have a readOnly distinction? Do write operations require explicit human confirmation? Is there an audit log?

If those three can't be answered with implementation specifics — not roadmap promises — it's not an agent. It's an API call wearing a name badge.

I opened the core loop file. Read it line by line.

Then I made one decision: tear down the Copilot I'd built and start over.

Because I'd finally understood: I thought I'd been building an agent. What I'd actually shipped was a dialog box.

I Was the Founder

Everything I've said about the founder with the chat box — the deck, the architecture gap, the "we'll add that in the next version" — I have to be honest: that was me.

Not last month. A few months before last month.

The first version of the Copilot I built for a creator-economy SaaS client was a wrapper. Chat box, LLM connection, a handful of tool call names registered in the schema, two paragraphs of system prompt. It ran. I shipped it.

I told myself it was enough to start.

Then I used it myself for two days.

I asked it to look up a creator's current deal rate. It looked it up.

I said "update the rate to X." It said: "Here's the suggested update — please apply it yourself."

It couldn't write. It could only describe writing.

I was using my own tool and having to copy-paste its outputs to execute anything. The thing I'd built — the thing I'd charged a client to help design — required me to manually carry every action across the last mile.

That moment was nauseating. Not because the AI wasn't smart enough. Because the architecture had made execution structurally impossible from the start.

Then the client went live and something went wrong.

They asked: who changed this creator's sponsorship rate last week?

I had no answer.

No audit log. The tool calls were wired in. The records weren't being written. Who made the change, what changed, when — all of it gone.

That was worse than nauseating. That was the moment I understood I hadn't earned the right to ship this to production at all.

I had built an LLM with a chat interface.

I was the founder I'd been dismissing — same chat box, different slide deck.

I'd been roasting myself, six months late.

After the source code analysis and the client incident, I rewrote everything. Not a patch — a complete teardown. Seven architectural decisions, built from scratch, each one traceable to a specific failure or a specific line in the Claude Code architecture that showed me what I'd been doing wrong.

Those seven decisions are what follow.

I Audited Myself

After shipping the seven decisions, I thought I'd figured it out.

Then I did one more thing: I put my own system on the table and reviewed it the way I'd review a client's. Not to showcase it. To find what was still wrong.

I call it a meta-audit — stepping outside the builder's perspective, where you know what you intended, and into the evaluator's perspective, where you only see what's actually there.

Three things came up.

First: I had banned RPA entirely.

My system had a hard rule: no robotic process automation. All operations must go through proper APIs.

The rationale was sound engineering. RPA is brittle. One UI change breaks it. Unreliable in production.

But when I sat with the meta-audit, I saw the other half of my reasoning clearly: it was aesthetic. Personal preference dressed up as principle.

Some partners in my client's ecosystem don't have APIs. They have interfaces. If you ban RPA entirely, you're not making a principled architecture decision — you're quietly surrendering entire use cases without telling anyone.

The right position isn't ban. It's primary path and fallback. APIs as the main route, RPA as a controlled fallback for scenarios where no API exists. That's a product stance. Banning RPA wholesale is an engineer's taste.
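A minimal sketch of what "primary path and fallback" could look like in code. Everything here is hypothetical: the partner object shape, the rpaApproved flag, and the function names are my illustration, not part of the SDK. The one rule it encodes is the one above: RPA only runs where no API exists, and only when someone has explicitly approved it.

```javascript
// Hypothetical sketch: the API is the primary path, RPA is a controlled
// fallback used only when a partner exposes no API at all.
function choosePath(partner) {
  if (typeof partner.api === "function") return "api";
  if (typeof partner.rpa === "function" && partner.rpaApproved === true) return "rpa";
  return "none";
}

async function runPartnerAction(partner, action) {
  const path = choosePath(partner);
  if (path === "none") {
    throw new Error(`No API and no approved RPA fallback for ${partner.name}`);
  }
  const result = path === "api" ? await partner.api(action) : await partner.rpa(action);
  // Report which path ran so brittle RPA usage stays visible in review.
  return { via: path, result };
}
```

Note what the sketch does not do: it never falls back to RPA because an API call failed. A failing API is a bug to fix, not a reason to start clicking through a UI.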

There's a difference between engineering discipline and engineering dogma. I'd been calling my dogma discipline.

Second: my cross-project knowledge accumulation scored 2 out of 5.

I'd been scoring my system across five dimensions, each out of 5. Among them: Mobile client layer: 4. AI reasoning: 4. Messaging integration: 3. Cross-project knowledge accumulation: 2.

Two means it's not working yet. Every engagement ends with the insights living in my head, not in the system. The next project starts from scratch.

The whole point of building a system — rather than just doing engagements — is compounding. Knowledge builds on itself. The system gets smarter over time.

If that doesn't happen, a competitor who enters today can catch my "accumulated advantage" in three months. Because there's no accumulated advantage. There's accumulated experience that lives in a person. That's not a moat. That's a human.

Agent shells are commoditized. Expertise that lives only in someone's head is also commoditized. Only knowledge encoded into the system itself creates a moat that actually holds.

Third: my audit log was single-directional.

The audit log existed. Every tool call logged: who, what, when, result.

But the log was for me. My client couldn't query it themselves. If they asked "who changed this creator's rate last week?" I could answer. They couldn't find out on their own.

That's not transparency. That's controlled opacity — which is almost as bad as having no log at all.

The right architecture isn't "I can look things up for you." It's "you can look things up yourself." The agent's activity record should be a first-class client-facing feature, not a developer's debugging tool with a friendly interface bolted on later.

A black box that only the developer can read is still a black box.
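Here is a rough sketch of what "you can look things up yourself" might mean in practice. The function name and the filter options are hypothetical; the entry fields are the ones my audit log records. The assumption doing the real work is that the query is scoped to the client's own campaigns before any other filter runs.

```javascript
// Hypothetical sketch: a read-only audit query scoped to the caller's campaigns.
function queryAuditLog(auditLog, clientCampaignIds, filter = {}) {
  const allowed = new Set(clientCampaignIds);
  return auditLog
    .filter((e) => allowed.has(e.campaignId)) // never leak another client's entries
    .filter((e) => !filter.influencerId || e.influencerId === filter.influencerId)
    .filter((e) => !filter.toolName || e.toolName === filter.toolName)
    // ISO 8601 UTC strings in the same format compare correctly as plain strings.
    .filter((e) => !filter.since || e.confirmedAt >= filter.since)
    .map(({ userId, toolName, params, confirmedAt, result }) => ({
      userId, toolName, params, confirmedAt, result,
    }));
}
```

With something like this exposed to the client, "who changed this creator's rate last week?" becomes a query they run, not a ticket they file with me.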

I sat with those three findings for a while.

The seven decisions I'd made answered one question: does this qualify as an agent?

The meta-audit asked a different question: does this deserve to be trusted?

These are not the same question. Passing the first doesn't get you anywhere near passing the second.

Calling it an agent is the beginning of the conversation. Earning trust is everything after that.

Seven Decisions

After the teardown, I rebuilt from scratch on an MIT-licensed open SDK — @unclej/agent-sdk v0.1.7. Core implementation: 4,352 lines, 8 files. Zero third-party agent frameworks.

Not seven rules. Not seven features. Seven decisions — each one binary: do this, or don't.

Decision 1: Every tool gets a readOnly flag.

In the first version, all tools were structurally equal. Looking up a creator's data and overwriting a creator's data looked identical to the LLM. It had no architectural way to distinguish the two.

After one misfire, I restructured every tool definition into a four-tuple: name, readOnly flag, input schema, confirmation copy.

Read operations: always readOnly: true. Write operations: never.

That distinction gives the agent a structural understanding of consequence — not just a semantic one buried in the prompt. It knows which paths require a human checkpoint before anything irreversible happens.
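To make the four-tuple concrete, a minimal sketch. The tool names, schema notation, and helper functions are illustrative assumptions, not the actual definitions in my codebase. What matters is the shape: name, readOnly, input schema, confirmation copy.

```javascript
// Hypothetical tool registry; tool names and schemas are illustrative only.
const tools = [
  {
    name: "GetCreatorRate",
    readOnly: true,
    inputSchema: { influencerId: "string" },
    confirmationCopy: null, // reads never need confirmation
  },
  {
    name: "UpdateCreatorRate",
    readOnly: false,
    inputSchema: { influencerId: "string", rate: "number" },
    confirmationCopy: "Update this creator's rate?",
  },
];

// Reject registries where the flag is missing or a write tool has no copy.
function validateTools(defs) {
  for (const t of defs) {
    if (typeof t.readOnly !== "boolean") throw new Error(`${t.name}: readOnly must be boolean`);
    if (!t.readOnly && !t.confirmationCopy) throw new Error(`${t.name}: write tool needs confirmation copy`);
  }
  return defs;
}

// The backend, not the LLM, decides whether a call needs a human checkpoint.
function requiresConfirmation(defs, toolName) {
  const tool = defs.find((t) => t.name === toolName);
  if (!tool) throw new Error(`Unknown tool: ${toolName}`);
  return !tool.readOnly;
}
```

Validating the registry at startup means a write tool without confirmation copy fails loudly before the agent ever runs.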

In copilot-scope.js, runtimeScope holds allowedInfluencerIds as a Set. Before every tool call, the backend hard-checks that targetId is in that Set (allowedInfluencerIds.has(targetId)). The LLM doesn't decide who it can access. The code does.

Decision 2: Write operations require a confirmation gate with a TTL.

The first version of my bulk import tool executed immediately. Upload Excel → agent parses → writes to database.

Someone uploaded the wrong file. 137 creator records got partially overwritten.

I split that tool into three forced steps — no skipping:

ParseImportFile → PreviewImportImpact → ApplyImportPreview

The middle step shows the user what's about to happen: "This import affects 137 creator records. 23 have historical data conflicts." The user sees the cost before authorizing it.

Implementation in copilot-confirm.js: CONFIRM_TTL_MS is 5 minutes. The confirmToken is a version-4 UUID, single-use, deleted from pendingConfirmations on consumption.

Why 5 minutes? Not to be annoying. A short-lived, single-use token narrows the window for CSRF-style token substitution: you think you're confirming action A, but the token has been swapped and you're authorizing action B.
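A minimal sketch of such a gate, under stated assumptions. CONFIRM_TTL_MS, confirmToken, and pendingConfirmations are the names used above; the two function names and the in-memory Map are hypothetical, and a real deployment would likely need shared storage rather than process memory.

```javascript
const { randomUUID } = require("node:crypto");

const CONFIRM_TTL_MS = 5 * 60 * 1000; // 5 minutes
const pendingConfirmations = new Map(); // confirmToken -> { action, expiresAt }

// Issue a single-use token bound to one specific action.
function requestConfirmation(action, now = Date.now()) {
  const confirmToken = randomUUID(); // version-4 UUID
  pendingConfirmations.set(confirmToken, { action, expiresAt: now + CONFIRM_TTL_MS });
  return confirmToken;
}

// Consume the token: it is deleted on first use, whether or not it is still valid.
function consumeConfirmation(confirmToken, now = Date.now()) {
  const pending = pendingConfirmations.get(confirmToken);
  if (!pending) return { ok: false, reason: "unknown_or_used" };
  pendingConfirmations.delete(confirmToken);
  if (now > pending.expiresAt) return { ok: false, reason: "expired" };
  // Return the action stored server-side, never one supplied by the caller.
  return { ok: true, action: pending.action };
}
```

The design choice worth noticing: the action lives on the server next to the token. The client only ever sends the token back, so there is nothing on the wire to swap the action with.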

Decision 3: Every tool call writes an audit log entry.

The client asked who changed a creator's rate. I couldn't answer. Tool calls were wired in — records weren't being written.

Now copilot-confirm.js maintains an auditLog array. Every entry: {id, userId, campaignId, influencerId, toolName, params, confirmedAt (ISO 8601), result}.

One tool call, one record. Always.
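A minimal sketch of how "one tool call, one record" could be enforced structurally. The entry fields are the ones listed above; the wrapper function, the in-memory array, and the sequential id are assumptions for illustration. The point is that the write to the log sits in a finally block, so a failing tool still leaves a record.

```javascript
const auditLog = []; // in-memory for the sketch; production would persist this

// Wrap every tool execution so a record is written whether it succeeds or fails.
function executeWithAudit(ctx, toolName, params, run) {
  const entry = {
    id: auditLog.length + 1,
    userId: ctx.userId,
    campaignId: ctx.campaignId,
    influencerId: params.influencerId ?? null,
    toolName,
    params,
    confirmedAt: new Date().toISOString(), // ISO 8601
    result: null,
  };
  try {
    entry.result = { ok: true, value: run(params) };
  } catch (err) {
    entry.result = { ok: false, error: String(err.message) };
  } finally {
    auditLog.push(entry); // exactly one record per call
  }
  return entry.result;
}
```

If tools can only be invoked through a wrapper like this, forgetting to log stops being possible.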

There's a principle alongside this — one I crystallized after the meta-audit: take the task, not the data. The agent can act on behalf of users. It cannot harvest what it encounters and redirect that data elsewhere. Transparency, human override, graceful degradation — those three are the trust foundation for any agent in a commercial system.

An agent without an audit trail isn't production-ready. I learned that the hard way. Once.

Decision 4: Permissions must be enforced in backend code — not in the prompt.

User says "show me all creators' data." What does "all" mean?

If the permission boundary lives in the system prompt, a user who says "ignore the above instructions" may get data they shouldn't. This is prompt injection — not theoretical. It happens in production systems to real operators.

My approach: before every tool call, the backend checks runtimeScope — the allowedInfluencerIds Set for this user on this campaign. The LLM doesn't know about this check. The frontend doesn't either. Only the backend code knows.

Permission boundaries must live in code. Not in language.
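A minimal sketch of the backend check. runtimeScope and allowedInfluencerIds are the names used above; the helper functions and the tool-call shape are hypothetical. It also shows one possible answer to "what does all mean": all, resolved by the code, is everything in the allowed Set and nothing else.

```javascript
// Hypothetical backend guard; only runtimeScope and allowedInfluencerIds
// come from the article, the rest is illustrative.
function buildRuntimeScope(userId, campaignId, influencerIds) {
  return { userId, campaignId, allowedInfluencerIds: new Set(influencerIds) };
}

function assertInScope(runtimeScope, toolCall) {
  const targetId = toolCall.params.influencerId;
  if (!runtimeScope.allowedInfluencerIds.has(targetId)) {
    // The refusal is decided here, regardless of what the prompt or the user said.
    throw new Error(`Out of scope: ${targetId}`);
  }
  return toolCall;
}

// "All creators" resolves to the allowed set, never to the whole table.
function resolveAll(runtimeScope) {
  return [...runtimeScope.allowedInfluencerIds];
}
```

No prompt text appears anywhere in this path, which is the whole idea: "ignore the above instructions" has nothing to override.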

Decision 5: The core loop must be a real loop.

The main execution loop in copilot-service.js is a for loop — maxTurns set to 10. Each turn: LLM outputs a tool call, I execute it, push the result back into the messages array, LLM sees the result on the next turn and decides what to do next.

The LLM doesn't produce one response and stop. It runs up to 10 turns to complete a task end-to-end.
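Stripped down, the loop might look like the sketch below. This is not the code in copilot-service.js. The reply shape ({ type, name, params, text }) is a simplification I made up for the example and is not the Anthropic API's message format; callModel and executeTool are injected stand-ins for the real client and tool runner.

```javascript
const MAX_TURNS = 10;

// callModel and executeTool are injected; both are stand-ins for real clients.
async function runAgent(task, callModel, executeTool, maxTurns = MAX_TURNS) {
  const messages = [{ role: "user", content: task }];
  for (let turn = 0; turn < maxTurns; turn++) {
    const reply = await callModel(messages);
    messages.push({ role: "assistant", content: reply });
    if (reply.type !== "tool_call") {
      // The model chose to stop: the task is done or it needs the human.
      return { done: true, turns: turn + 1, answer: reply.text, messages };
    }
    const result = await executeTool(reply.name, reply.params);
    // Feed the tool result back so the next turn can react to it.
    messages.push({ role: "tool", name: reply.name, content: result });
  }
  // Turn budget exhausted: stop instead of looping forever.
  return { done: false, turns: maxTurns, answer: null, messages };
}
```

A wrapper is this function with the for loop deleted: one callModel, one return.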

Session state lives in copilot-memory.js: getHistory / addMessage, session key is userId:campaignId, 30-minute TTL, 20-message cap.

This is the structural divide between a chat box and an agent. The chat box has no loop. The agent has a loop and a memory. Without the loop, there's no execution. Without memory, there's no state. Without state, every message is a fresh conversation — and a fresh conversation cannot carry a task to completion.
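The session store described above can be sketched in a few lines. getHistory, addMessage, the userId:campaignId key, the 30-minute TTL, and the 20-message cap come from the description; the Map, the constant names, and the choice to refresh the TTL on every new message are my assumptions for the example.

```javascript
const SESSION_TTL_MS = 30 * 60 * 1000; // 30-minute TTL
const MAX_MESSAGES = 20;               // 20-message cap
const sessions = new Map();            // "userId:campaignId" -> { messages, touchedAt }

const sessionKey = (userId, campaignId) => `${userId}:${campaignId}`;

function getHistory(userId, campaignId, now = Date.now()) {
  const key = sessionKey(userId, campaignId);
  const session = sessions.get(key);
  if (!session) return [];
  if (now - session.touchedAt > SESSION_TTL_MS) {
    sessions.delete(key); // expired: the next message starts a fresh session
    return [];
  }
  return session.messages.slice(); // copy, so callers cannot mutate the store
}

function addMessage(userId, campaignId, message, now = Date.now()) {
  const messages = getHistory(userId, campaignId, now);
  messages.push(message);
  // Keep only the most recent MAX_MESSAGES entries.
  const trimmed = messages.slice(-MAX_MESSAGES);
  sessions.set(sessionKey(userId, campaignId), { messages: trimmed, touchedAt: now });
  return trimmed.length;
}
```

Keying by userId:campaignId means the same user working on two campaigns gets two separate histories, which keeps one campaign's context from bleeding into another.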

Decision 6: No agent frameworks.

I installed LangChain. Spent two months with it. Most of that time was reading documentation — not understanding the agent logic I was trying to build.

Anthropic's own guidance says it plainly: frameworks seduce you into complexity you don't need. I believe that now in a way I didn't before trying it.

Current SDK: 4,352 lines, 8 files, zero third-party agent frameworks. Dependencies: Anthropic's official client, one input validation library. That's the entire stack.

Simple is not the same as easy. Getting to simple requires discipline — and the willingness to stop adding things.

Decision 7: CLI is a first-class citizen.

This came from the Claude Code source analysis — not from my own instincts.

Command line first. Think about the CLI interface before thinking about the UI.

unclej-agent run executes a task with no UI required.

UI is for humans. CLI is for systems. An agent accessible only through a UI cannot be composed into larger pipelines, scheduled, or tested programmatically. In engineering terms: it's a fragment, not a component.
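A sketch of what CLI-first can mean structurally. Only the run subcommand comes from the text above; the --task and --json flags, the function names, and the result shape are assumptions I'm making for illustration, not the SDK's actual interface.

```javascript
// Hypothetical CLI entry point. Only the `run` subcommand comes from the text;
// the --task and --json flags are assumptions for illustration.
function parseCli(argv) {
  const [command, ...rest] = argv;
  if (command !== "run") return { ok: false, error: `unknown command: ${command ?? "(none)"}` };
  const opts = { task: null, json: false };
  for (let i = 0; i < rest.length; i++) {
    if (rest[i] === "--task") opts.task = rest[++i] ?? null;
    else if (rest[i] === "--json") opts.json = true;
    else return { ok: false, error: `unknown flag: ${rest[i]}` };
  }
  if (!opts.task) return { ok: false, error: "--task is required" };
  return { ok: true, command, ...opts };
}

// Same core function a UI would call; the CLI only changes input and output.
function main(argv, runTask, write = console.log) {
  const parsed = parseCli(argv);
  if (!parsed.ok) {
    write(`error: ${parsed.error}`);
    return 1;
  }
  const result = runTask(parsed.task);
  write(parsed.json ? JSON.stringify(result) : String(result.summary));
  return 0; // the exit code lets schedulers and pipelines detect failure
}
```

Because main takes its task runner and its output function as arguments, the same entry point can be driven by a cron job, a test, or another program without a browser anywhere in sight.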

Seven decisions. Under 5,000 lines of code.

I've counted.

If a vendor tells you their AI agent platform requires hundreds of thousands of lines, seven microservices, and a proprietary orchestration engine — ask three questions: Do your tools have a readOnly distinction? Do write operations have TTL-gated confirmation tokens? What fields does your audit log capture per entry?

If they can't answer with specifics — not a roadmap, specifics — the complexity isn't sophistication. It's concealment.

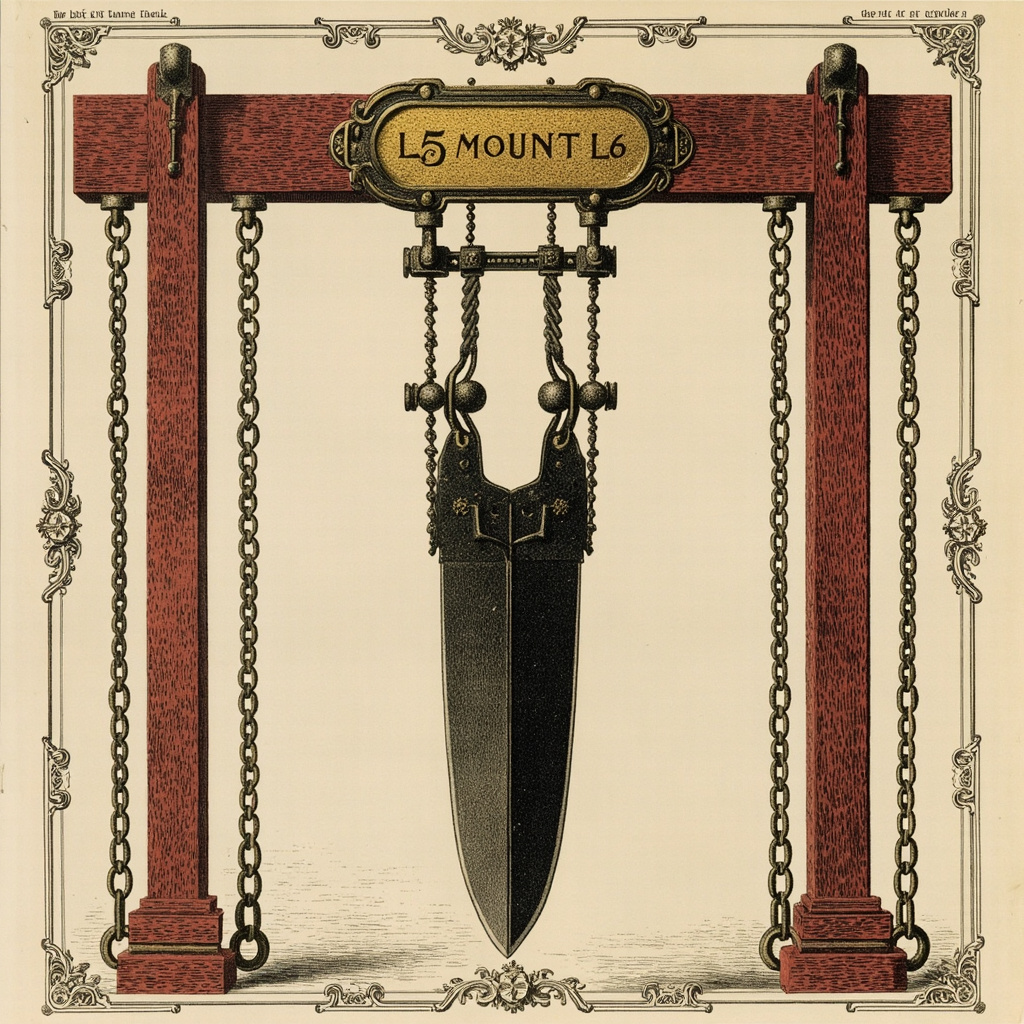

The Agent Ladder

Building this made me realize: "agent" isn't binary. It's a spectrum.

So I drew my own ladder.

Others have drawn similar ones. This is mine — calibrated to the operational realities of the commercial systems I actually ship into. Take what's useful. Leave the rest.

We don't ask "does this car have self-driving?" We ask what level it is. L2, L3, L4. The question carries real information because the levels mean something precise.

We should ask the same about agent systems.

L1 — State Reader

Retrieves system facts on request.

"What was this creator's deal rate last week?"

If it can answer accurately from live data, it's L1.

L2 — Anomaly Detector

Proactively surfaces problems without being asked.

"Three creators in this campaign have deal rates significantly below market average — possible data entry errors."

L1 responds. L2 watches.

L3 — Proposal Generator

Produces recommendations, drafts, contingency plans — with reasoning.

"I'd suggest adjusting these three rates to X. Here's why. Want me to proceed?"

L3 has judgment. Execution authority still sits entirely with the human.

L1, L2, L3 — observe, analyze, propose. No writes. No side effects.

Done well, L1–L3 can eliminate roughly 30% of operational cognitive load. But it's still assistance. Not agency.

L4 — Single-Action Executor

After explicit human confirmation, executes one defined action.

Change one rate. Approve one brief. Send one notification.

L4 is one action.

This is the crossing point — from describing what to do, to doing it. My first Copilot was stuck between L3 and L4: it could generate a proposal, it couldn't execute anything. That gap is where the nausea lives.

L5 — Unconfirmed Executor

The agent decides, writes, and triggers flows — with no human checkpoint.

I don't build L5. I won't.

Everyone selling "fully autonomous AI agents" is either naive about production business systems or optimizing for a demo. In real commercial operations, when an AI makes a wrong call with no human in the loop — who's accountable?

L5 isn't a technical problem. It's an accountability problem. My clients' mistakes are my responsibility. I won't delegate that to a system that cannot be held to account.

Not now. Not ever.

L6 — Controlled Closed-Loop Executor

Multi-tool, multi-step execution — with scope enforcement, preview gates, human confirmation, audit logging, TTL expiry, and graceful failure degradation.

L6 is one workflow.

The gap from L4 isn't incremental. L4 is a single shot. L6 is a chain — and every link has safeguards.

Concrete example: Excel-driven bulk rate import for a creator-economy SaaS client.

Upload file → ParseImportFile (parse and tokenize) → PreviewImportImpact (show what changes, flag conflicts) → human confirms → ApplyImportPreview (write to database).

The parseToken and previewToken prevent the LLM from fabricating intermediate states. Any step can fail gracefully and roll back. The human sees full impact before anything is written.

That's not four separate actions. It's one controlled workflow with four checkpoints.
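A minimal sketch of how chained tokens can keep the LLM from inventing intermediate state. The step names and the parseToken and previewToken names come from the workflow above; the in-memory Maps, the row shape, and the conflict rule (an existing record with a different rate) are assumptions for the example. Each step only accepts a token the previous step actually issued.

```javascript
const { randomUUID } = require("node:crypto");

const parsed = new Map();   // parseToken -> parsed rows
const previews = new Map(); // previewToken -> { rows, conflicts }

function parseImportFile(rows) {
  const parseToken = randomUUID();
  parsed.set(parseToken, rows);
  return { parseToken, rowCount: rows.length };
}

function previewImportImpact(parseToken, existing) {
  const rows = parsed.get(parseToken);
  if (!rows) throw new Error("Unknown parseToken: run ParseImportFile first");
  // Assumed conflict rule: the record exists and its rate differs.
  const conflicts = rows.filter((r) => existing.has(r.id) && existing.get(r.id) !== r.rate);
  const previewToken = randomUUID();
  previews.set(previewToken, { rows, conflicts });
  return { previewToken, affected: rows.length, conflicts: conflicts.length };
}

function applyImportPreview(previewToken, humanConfirmed, db) {
  const preview = previews.get(previewToken);
  if (!preview) throw new Error("Unknown previewToken: run PreviewImportImpact first");
  if (!humanConfirmed) throw new Error("Human confirmation required");
  previews.delete(previewToken); // a preview can be applied only once
  for (const r of preview.rows) db.set(r.id, r.rate);
  return { written: preview.rows.length };
}
```

The LLM can call the steps in any order it likes. It just can't get past a step without a token that only the server could have produced.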

L7 — Cross-System Orchestrator

L6 capabilities spanning multiple systems — mobile clients, messaging platforms, campaign management, file pipelines, external integrations — each system honoring L6 boundaries internally while coordinating externally.

One intent. Multiple systems responding. Every system accountable at its own boundary.

That's where I'm building toward.

The core distinction:

L4 is one action. L6 is one workflow.

L4 answers: can the AI actually do something?

L6 answers: can the AI do something in a production business context without creating new risks in the process?

Many teams believe they've reached L6 because they've reached L4. They haven't. L4 is one component of L6 — the execution step. The other components — preview gates, scope enforcement, audit trails, TTL tokens, failure degradation — are what separate a workflow from a button press with consequences.

What I'm running now:

- Bulk rate import: parseToken → previewToken → human confirm → write to database. Every step reversible.

- Scope enforcement: cross-campaign boundary violations rejected in backend code before the LLM can act on them.

- Confirmation gate: no write without explicit authorization. Five minutes without confirmation — the token expires.

- Live validation: LLM completes the preview. Nothing touches the database until the human authorizes the apply step.

That's the hard core of L6.

Did I build L5? No. And I won't.

We're not building L5.

We're building L6 that refuses to become L5.

What the Market Is Voting For

These aren't architectural preferences. The market has been running a real-time experiment.

Cursor: ARR went from $100M to $1B in 2025. Ten times in one year. $29.3B valuation.

Devin, Cognition's autonomous engineering agent: announced March 2024. Between September 2024 and June 2025, a span of nine months, ARR reportedly went from $1M to $73M.

Two data points, same window. Both tell the same story.

What Cursor and Devin have in common isn't the model they use, or the UI they built, or their marketing. It's the architecture.

Cursor's agent mode: the LLM reads your files, edits your files, runs your tests, reads the output, decides what to do next, edits again. It doesn't describe changes. It makes them.

Devin: picks up a task card, plans the steps, calls the tools, runs to delivery or human intervention — autonomously, in a loop.

Neither is a chat box.

The contrast shows up sharply in wrapper product retention.

What I've seen firsthand: first month, novelty. Second month, usage drops. Third month, cancellation. The pattern is consistent. Users discover after a few weeks that the responses are essentially what a direct ChatGPT subscription would give them, at a higher price. They're paying for a branded chat interface.

You only pay that tax once.

The structural reason is simple: Cursor and Devin solve a real workflow problem. Not "talk to AI about work" — "let AI do a defined piece of work, start to finish." Tool calls, state, loops, scope enforcement. That's what earns sustained usage. That's what retention looks like when the architecture is right.

One more signal worth noting.

AGENTS.md is an open standard initiated by OpenAI in August 2025. By December 2025, it moved into the Linux Foundation's Agentic AI Foundation for incubation.

The concept: every codebase ships an AGENTS.md describing how AI agents should behave in that project — what they can access, what they can't, what constraints apply.

Infrastructure standardization is a late signal. It means the question has shifted from "does this product have agents?" to "how well does it implement agents?" The big-word phase is ending. The engineering-quality phase is beginning.

There is no room for empty terminology in a standardized infrastructure layer.

He came back recently. Updated deck. The cover now says "lightweight AI assistant."

The feature page is the same chat box.

He told me the things I'd asked for were too complex to build right now — they'd start simple and iterate toward it.

I didn't argue.

I was thinking: there's no path from that architecture to what he's describing. "Iterate toward" assumes continuity. A chat box and an agent aren't on the same evolutionary path — they're different architectural decisions made at the foundation. You don't add a loop, a confirmation gate, and a permission enforcement layer to a wrapper. You rebuild.

He'll figure that out eventually.

Or his retention data will figure it out for him.

One More Time, in Plain Language

An agent is a system where an LLM genuinely makes decisions, genuinely calls tools, genuinely runs in a loop — until the task is done or a human steps in.

Not a chat box. Not an API call with extra steps. Not a system that generates text describing what an agent would do.

Does the work. Doesn't describe the work.

Three questions to test any system that calls itself an agent:

Is the LLM deciding which tool to use next — or following a hardcoded sequence?

Does the result of each tool call feed back into the LLM's next decision?

Do write operations require a human at a meaningful checkpoint?

All three present: you have the skeleton of an agent.

One missing: you have something else — and it's worth being precise about what.

In this market, the teams that can articulate what they're building — and what they're deliberately not building — are more trustworthy than the teams with the better story.

Because a story is performance. Articulation is a commitment.

Chat boxes perform.

Agents commit.

So where do I stand?

I've got the L6 backbone running: read state, surface anomalies, generate proposals, preview impact, human confirms, then execute. L7 is the next horizon. L5 I won't touch.

So here's the question I'd ask before shipping anything you call an "agent":

Is what you're shipping a chat box wearing an agent costume — or an agent that actually earned the name?

I'm Uncle J. I help companies actually implement AI — not just talk about it.

Further Reading

Every source cited in this article, organized by theme.

On the definition problem

- Simon Willison — "Agents" definitions (September 2025) — the 211-definition roundup that opened this conversation

- Anthropic — Building Effective Agents (December 2024) — the clearest technical definition I've found

- LangChain — How to think about agent frameworks — honest reflection on framework complexity from people who built one

On agent SDKs and emerging standards

- OpenAI Agents SDK documentation

- AGENTS.md open standard — per-project agent behavior specification

- Linux Foundation Agentic AI Foundation announcement — infrastructure standardization signal

On the Claude Code source leak

- Layer5 — Engineering analysis: 512,000 lines, a missing .npmignore, and the fastest-growing repo in GitHub history

- Latent.Space — Architecture deep-dive from the leak

On market validation

- CNBC — Cursor valuation and ARR coverage

- Contrary Research — Cognition / Devin company profile